Intelligent Search

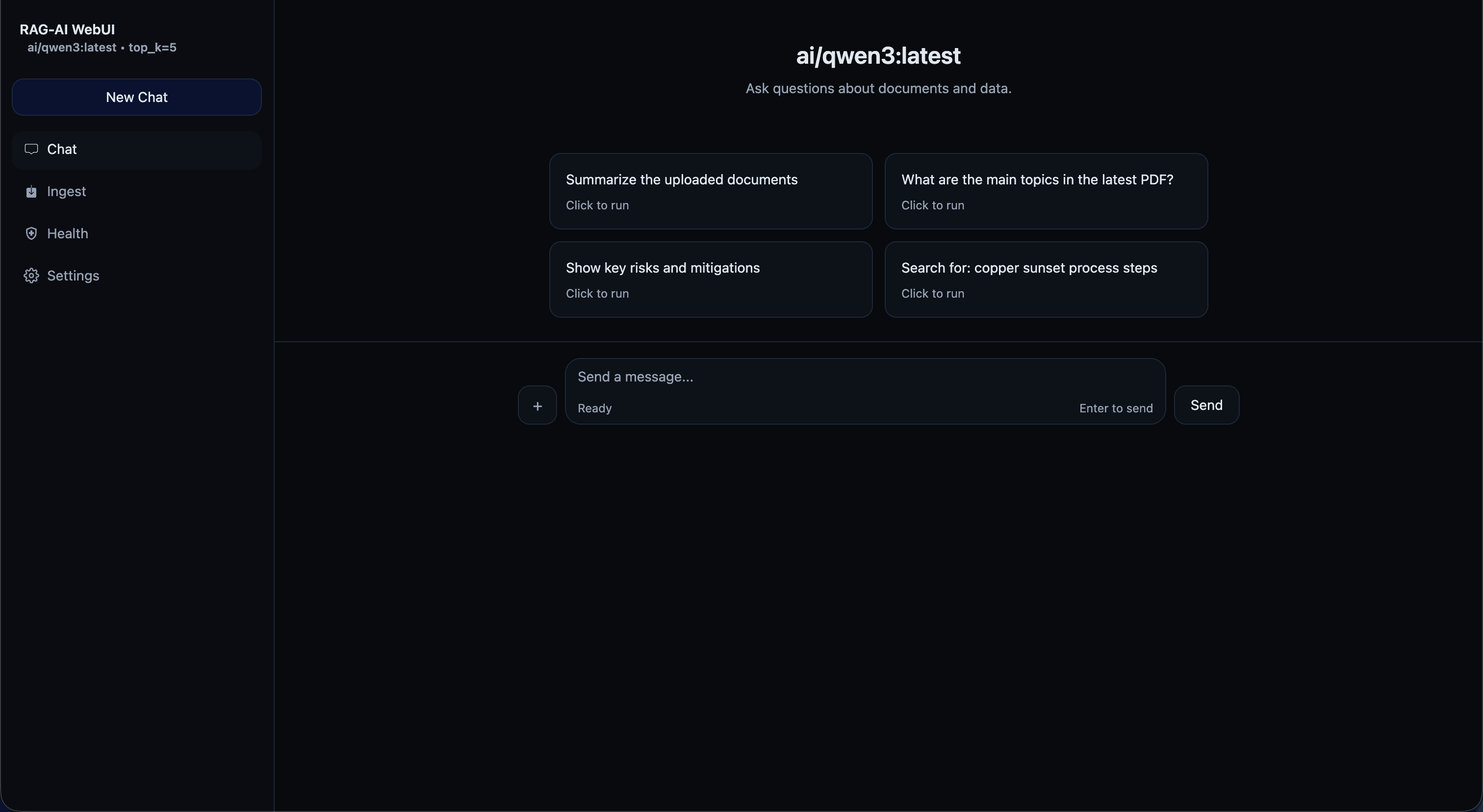

This project started as a practical need: make scattered documents (manuals, LLDs, reports, and so on) searchable and usable during day-to-day work.

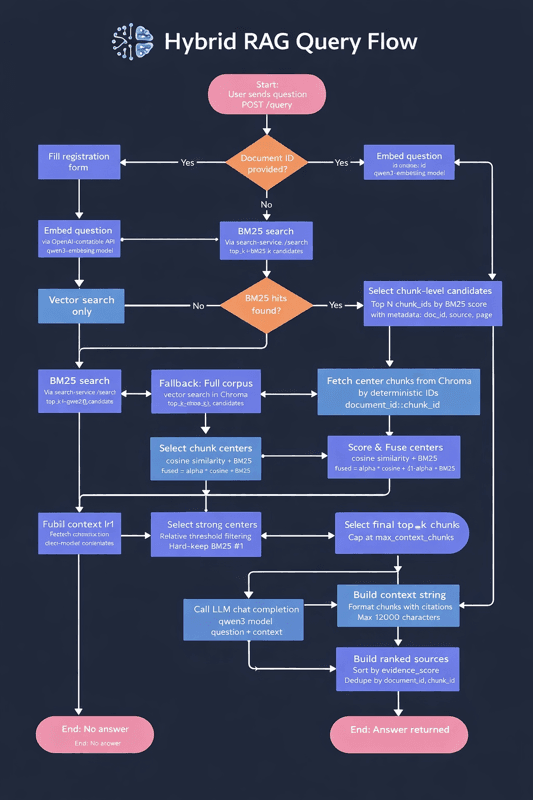

The solution is intelliSearch, a hybrid retrieval flow (BM25 + embeddings) with clean citations and a structure that can scale from personal docs to team knowledge bases.
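
To make "hybrid" concrete, here is a minimal sketch of one common way to merge a BM25 ranking with an embedding ranking: reciprocal rank fusion (RRF). The write-up does not say how intelliSearch combines the two result lists, so RRF, the function name `reciprocal_rank_fusion`, the constant `k=60`, and the document ids are all assumptions made for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so items ranked well by both retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from the two retrievers (best match first).
bm25_ranking = ["manual_3", "lld_7", "report_2"]
vector_ranking = ["lld_7", "report_9", "manual_3"]

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)
```

RRF only needs rank positions, which sidesteps the problem that BM25 scores and cosine similarities live on different scales. A weighted sum of normalized scores would be an equally plausible choice.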

The result is a fast, explainable search experience that feels like an internal assistant—grounded, auditable, and easy to extend.

A hybrid RAG platform combining structured chunking, fast retrieval, and citation-ready answers—optimized for reliability and scale.

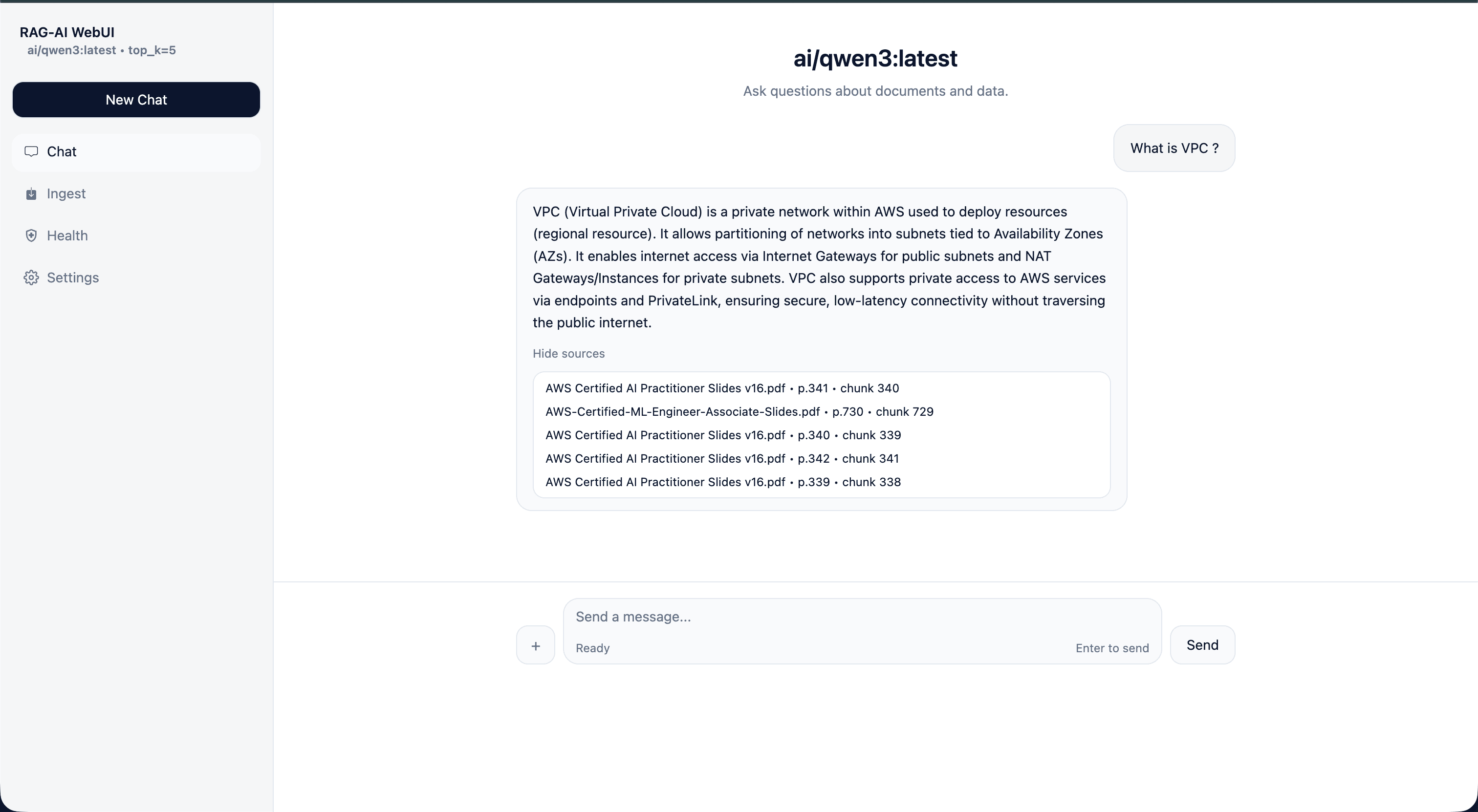

User submits question → Hybrid retrieval (BM25 + vector search) → Context building → LLM generates answer → Answer returned with citations
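
The flow above can be sketched as a single function that takes a retriever and a generator as plain callables. This is an illustrative outline, not the project's actual code: `Chunk`, `build_prompt`, `answer`, the prompt wording, and the stub retriever and LLM are all hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc: str   # source document name
    page: int  # page the chunk came from
    text: str  # chunk content


def build_prompt(question, chunks):
    """Number each retrieved chunk so the model can cite it as [n]."""
    sources = "\n".join(
        f"[{i}] ({c.doc}, p.{c.page}) {c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer using only the sources below and cite them as [n].\n\n"
        f"{sources}\n\nQuestion: {question}\nAnswer:"
    )


def answer(question, retrieve, generate, top_k=3):
    """Retrieve -> build context -> generate -> return answer with citations."""
    chunks = retrieve(question)[:top_k]
    text = generate(build_prompt(question, chunks))
    citations = [
        {"id": i, "doc": c.doc, "page": c.page}
        for i, c in enumerate(chunks, start=1)
    ]
    return {"answer": text, "citations": citations}


# Stubs standing in for the hybrid retriever and the LLM call.
def fake_retrieve(question):
    return [Chunk("pump_manual.pdf", 12, "Hold the power button for 5 s to reset.")]


def fake_generate(prompt):
    return "Hold the power button for 5 s [1]."


result = answer("How do I reset the pump?", fake_retrieve, fake_generate)
print(result)
```

Passing the retriever and generator in as arguments keeps the pipeline testable without a live index or model, and the numbered sources are what make each answer traceable to a document and page.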

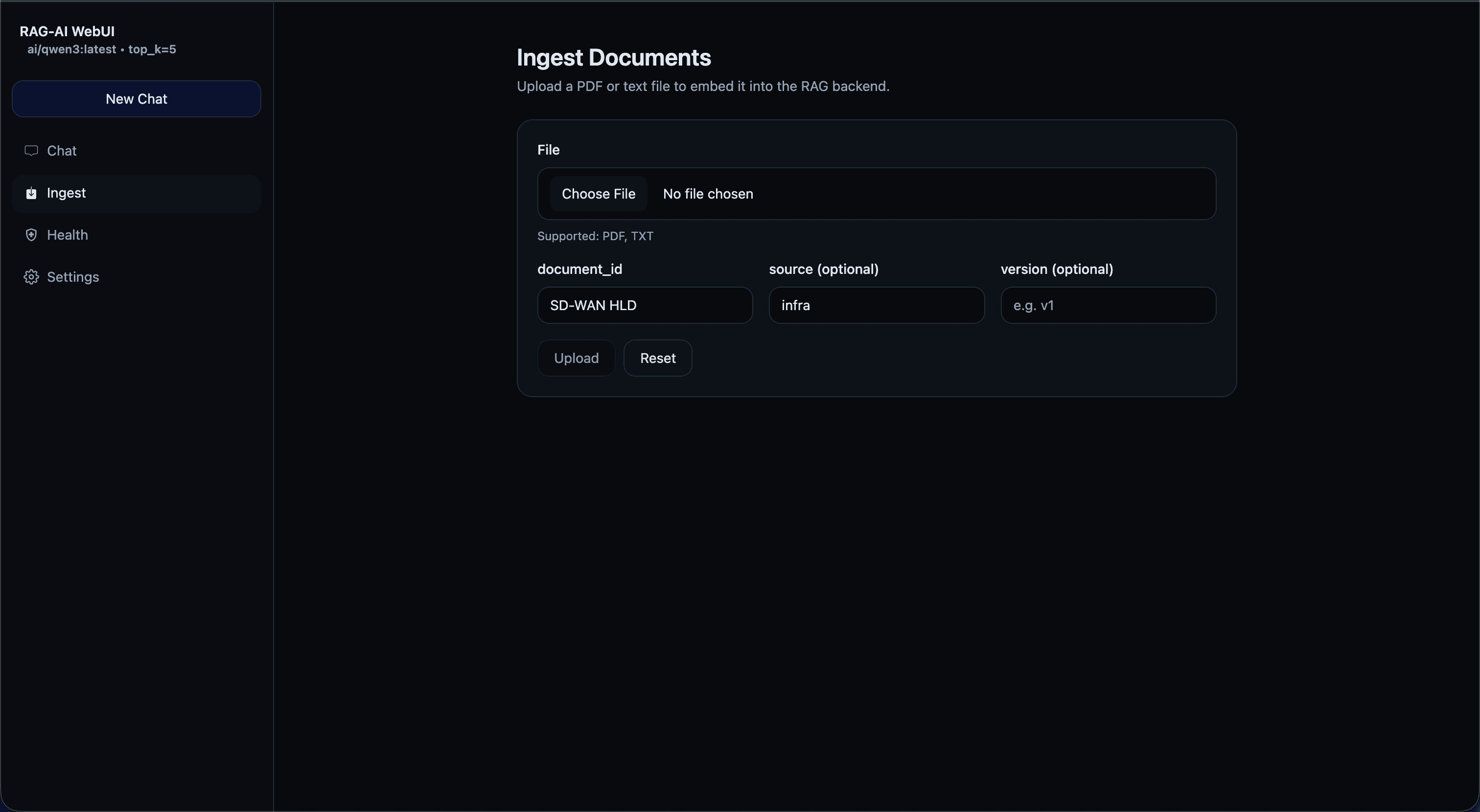

- Upload / ingest documents

- Chunking + embeddings + indexing

- Hybrid retrieval (BM25 + vector search)

- Rerank + build context + generate answer
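
As an illustration of the chunking step in the list above, the sketch below splits text into overlapping word windows before embedding and indexing. The page does not describe the actual chunking strategy, so the word-based windows, the `chunk_words` name, and the `size` and `overlap` defaults are assumptions.

```python
def chunk_words(text, size=200, overlap=40):
    """Split text into windows of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# Tiny example: ten "words", windows of four, one word shared between neighbors.
sample = " ".join(str(i) for i in range(10))
pieces = chunk_words(sample, size=4, overlap=1)
print(pieces)
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, at the cost of some duplicated text in the index. Each chunk would then be embedded and added to both the BM25 and vector indexes.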

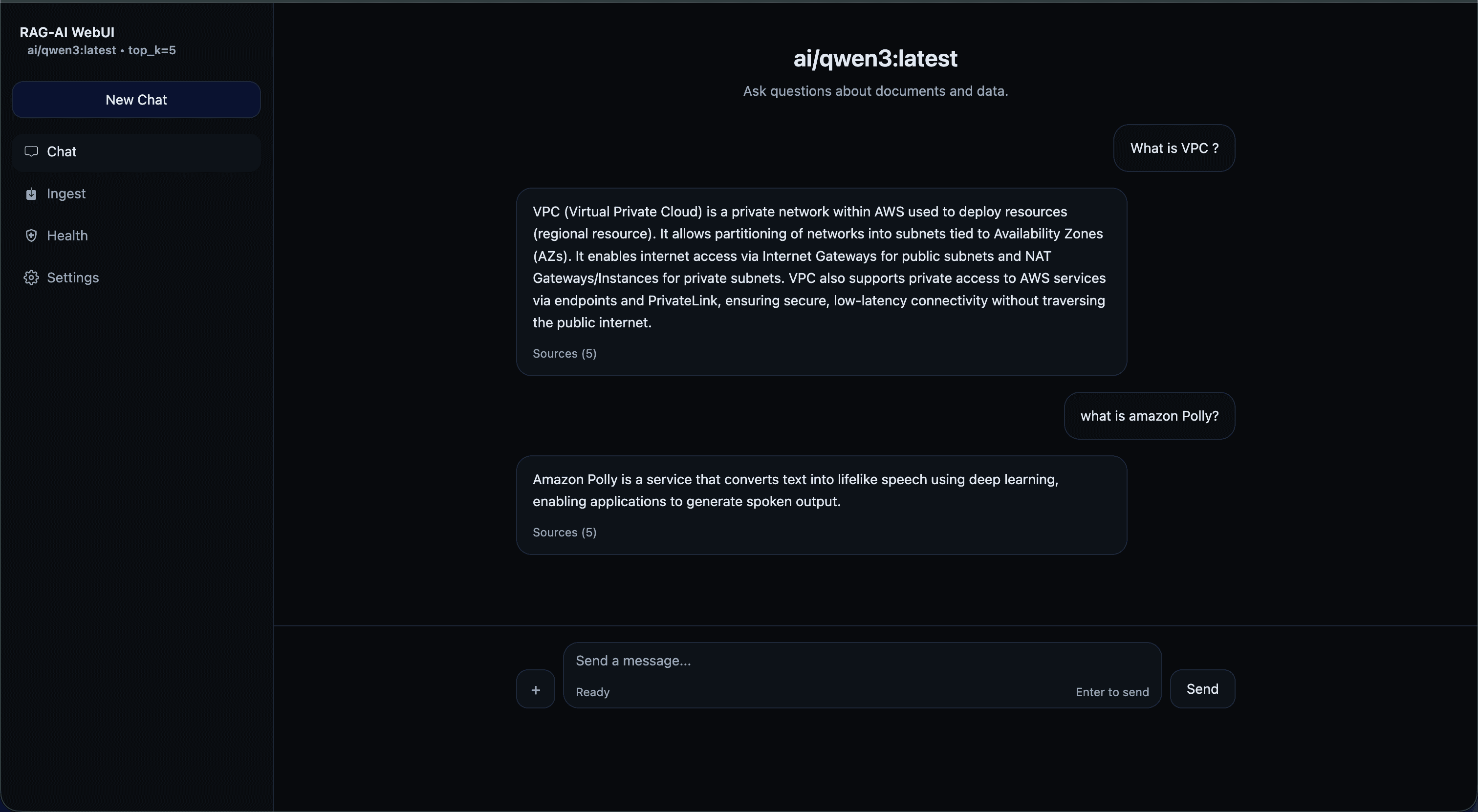

Short walkthrough showing ingestion → search → cited answers in real time.